Pre-Requisites of Flume Project:

hadoop-2.6.0

flume-1.6.0

hbase-1.1.2

phoenix-4.7.0

java-1.7

NOTE: Make sure that install all the above components

Flume Project Download Links:

`hadoop-2.6.0.tar.gz` ==> link

`apache-flume-1.6.0-bin.tar.gz` ==> link

`hbase-1.1.2-bin.tar.gz` ==> link

`phoenix-4.7.0-HBase-1.1-bin.tar.gz` ==> link

`kalyan-json-phoenix-agent.conf` ==> link

`bigdata-examples-0.0.1-SNAPSHOT-dependency-jars.jar` ==> link

`phoenix-flume-4.7.0-HBase-1.1.jar` ==> link

`json-path-2.2.0.jar` ==> link

`commons-io-2.4.jar` ==> link

-----------------------------------------------------------------------------

1. create "kalyan-json-phoenix-agent.conf" file with below content

agent.sources = EXEC

agent.channels = MemChannel

agent.sinks = PHOENIX

agent.sources.EXEC.type = exec

agent.sources.EXEC.command = tail -F /tmp/users.json

agent.sources.EXEC.channels = MemChannel

agent.sinks.PHOENIX.type = org.apache.phoenix.flume.sink.PhoenixSink

agent.sinks.PHOENIX.batchSize = 10

agent.sinks.PHOENIX.zookeeperQuorum = localhost

agent.sinks.PHOENIX.table = users2

agent.sinks.PHOENIX.ddl = CREATE TABLE IF NOT EXISTS users2 (userid BIGINT NOT NULL, username VARCHAR, password VARCHAR, email VARCHAR, country VARCHAR, state VARCHAR, city VARCHAR, dt VARCHAR NOT NULL CONSTRAINT PK PRIMARY KEY (userid, dt))

agent.sinks.PHOENIX.serializer = json

agent.sinks.PHOENIX.serializer.columnsMapping = {"userid":"userid", "username":"username", "password":"password", "email":"email", "country":"country", "state":"state", "city":"city", "dt":"dt"}

agent.sinks.PHOENIX.serializer.partialSchema = true

agent.sinks.PHOENIX.serializer.columns = userid,username,password,email,country,state,city,dt

agent.sinks.PHOENIX.channel = MemChannel

agent.channels.MemChannel.type = memory

agent.channels.MemChannel.capacity = 1000

agent.channels.MemChannel.transactionCapacity = 100

2. Copy "kalyan-json-phoenix-agent.conf" file into "$FUME_HOME/conf" folder

3. Copy "phoenix-flume-4.7.0-HBase-1.1.jar, json-path-2.2.0.jar, commons-io-2.4.jar and bigdata-examples-0.0.1-SNAPSHOT-dependency-jars.jar" files into"$FLUME_HOME/lib" folder

4. Generate Large Amount of Sample JSON data follow this article.

5. Execute Below Command to Generate Sample JSON data with 100 lines. Increase this number to get more data ...

java -cp $FLUME_HOME/lib/bigdata-examples-0.0.1-SNAPSHOT-dependency-jars.jar \

com.orienit.kalyan.examples.GenerateUsers \

-f /tmp/users.json \

-n 100 \

-s 1

6. Verify the Sample JSON data in Console, using below command

cat /tmp/users.json

7. To work with Flume + Phoenix Integration

Follow the below steps

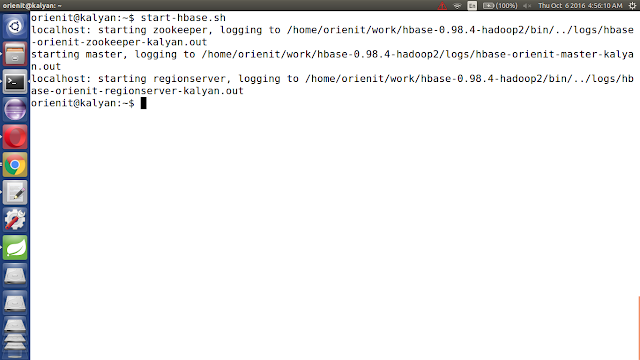

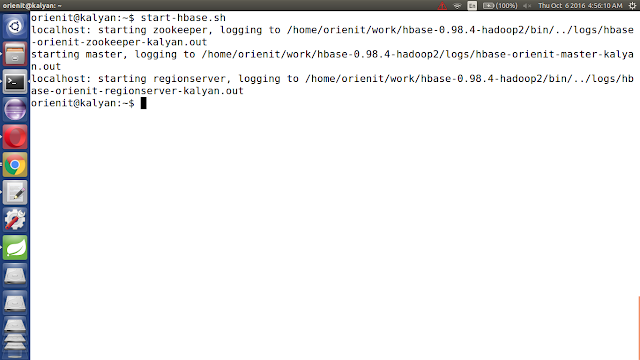

i. start the hbase using below 'start-hbase.sh' command.

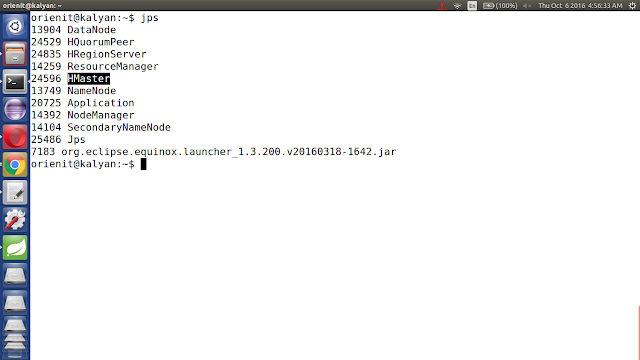

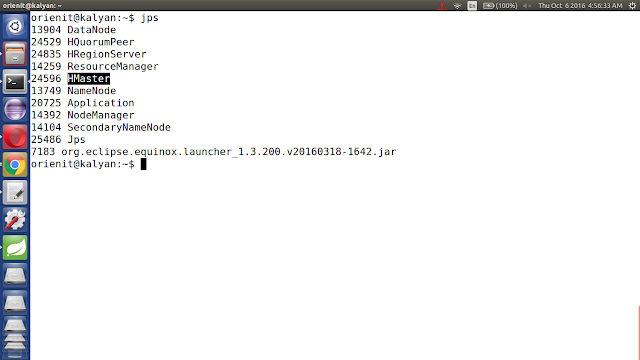

ii. verify the hbase is running or not with "jps" command

iii. Start the phoenix using below 'sqlline.py localhost' command.

iv. list out all the tables in phoenix using '!tables' command

8. Execute the below command to `Extract data from JSON data into Phoenix using Flume`

$FLUME_HOME/bin/flume-ng agent -n agent --conf $FLUME_HOME/conf -f $FLUME_HOME/conf/kalyan-json-phoenix-agent.conf -Dflume.root.logger=DEBUG,console

9. Verify the data in console

10. Verify the data in Phoenix

Execute below command to get the data from phoenix table 'users2'

!tables

select count(*) from users2;

select * from users2;

hadoop-2.6.0

flume-1.6.0

hbase-1.1.2

phoenix-4.7.0

java-1.7

NOTE: Make sure that install all the above components

Flume Project Download Links:

`hadoop-2.6.0.tar.gz` ==> link

`apache-flume-1.6.0-bin.tar.gz` ==> link

`hbase-1.1.2-bin.tar.gz` ==> link

`phoenix-4.7.0-HBase-1.1-bin.tar.gz` ==> link

`kalyan-json-phoenix-agent.conf` ==> link

`bigdata-examples-0.0.1-SNAPSHOT-dependency-jars.jar` ==> link

`phoenix-flume-4.7.0-HBase-1.1.jar` ==> link

`json-path-2.2.0.jar` ==> link

`commons-io-2.4.jar` ==> link

-----------------------------------------------------------------------------

1. create "kalyan-json-phoenix-agent.conf" file with below content

agent.sources = EXEC

agent.channels = MemChannel

agent.sinks = PHOENIX

agent.sources.EXEC.type = exec

agent.sources.EXEC.command = tail -F /tmp/users.json

agent.sources.EXEC.channels = MemChannel

agent.sinks.PHOENIX.type = org.apache.phoenix.flume.sink.PhoenixSink

agent.sinks.PHOENIX.batchSize = 10

agent.sinks.PHOENIX.zookeeperQuorum = localhost

agent.sinks.PHOENIX.table = users2

agent.sinks.PHOENIX.ddl = CREATE TABLE IF NOT EXISTS users2 (userid BIGINT NOT NULL, username VARCHAR, password VARCHAR, email VARCHAR, country VARCHAR, state VARCHAR, city VARCHAR, dt VARCHAR NOT NULL CONSTRAINT PK PRIMARY KEY (userid, dt))

agent.sinks.PHOENIX.serializer = json

agent.sinks.PHOENIX.serializer.columnsMapping = {"userid":"userid", "username":"username", "password":"password", "email":"email", "country":"country", "state":"state", "city":"city", "dt":"dt"}

agent.sinks.PHOENIX.serializer.partialSchema = true

agent.sinks.PHOENIX.serializer.columns = userid,username,password,email,country,state,city,dt

agent.sinks.PHOENIX.channel = MemChannel

agent.channels.MemChannel.type = memory

agent.channels.MemChannel.capacity = 1000

agent.channels.MemChannel.transactionCapacity = 100

2. Copy "kalyan-json-phoenix-agent.conf" file into "$FUME_HOME/conf" folder

3. Copy "phoenix-flume-4.7.0-HBase-1.1.jar, json-path-2.2.0.jar, commons-io-2.4.jar and bigdata-examples-0.0.1-SNAPSHOT-dependency-jars.jar" files into"$FLUME_HOME/lib" folder

4. Generate Large Amount of Sample JSON data follow this article.

5. Execute Below Command to Generate Sample JSON data with 100 lines. Increase this number to get more data ...

java -cp $FLUME_HOME/lib/bigdata-examples-0.0.1-SNAPSHOT-dependency-jars.jar \

com.orienit.kalyan.examples.GenerateUsers \

-f /tmp/users.json \

-n 100 \

-s 1

6. Verify the Sample JSON data in Console, using below command

cat /tmp/users.json

7. To work with Flume + Phoenix Integration

Follow the below steps

i. start the hbase using below 'start-hbase.sh' command.

ii. verify the hbase is running or not with "jps" command

iii. Start the phoenix using below 'sqlline.py localhost' command.

iv. list out all the tables in phoenix using '!tables' command

8. Execute the below command to `Extract data from JSON data into Phoenix using Flume`

$FLUME_HOME/bin/flume-ng agent -n agent --conf $FLUME_HOME/conf -f $FLUME_HOME/conf/kalyan-json-phoenix-agent.conf -Dflume.root.logger=DEBUG,console

9. Verify the data in console

10. Verify the data in Phoenix

Execute below command to get the data from phoenix table 'users2'

!tables

select count(*) from users2;

select * from users2;

Share this article with your friends.

Nice blog, thanks For sharing this useful article I liked this.

ReplyDeleteMBBS In Abroad

Mba In B Schools

MS In Abroad

GRE Training In Hyderabad

PTE Training In Hyderabad

Toefl Training In Hyderabad

Ielts Training In Hyderabad

Nice blog, thanks For sharing this useful article I liked this.

ReplyDeleteMBBS In Abroad

Mba In B Schools

MS In Abroad

GRE Training In Hyderabad

PTE Training In Hyderabad

Toefl Training In Hyderabad

Ielts Training In Hyderabad